A More Systematic Approach to Biological Risk

Management of emerging risks in life science and technology requires new leadership and a sober assessment of the legacy of Asilomar.

Abstract: Technological and social forces are allowing the growth of science outside a professionalized context. The Internet, mobile devices, and public availability of big data are technological facilitators of “citizen science”, in part through enabling data sharing, analysis, and communication. These technological changes now allow the entire scientific process, from funding and development of a research agenda, to conduct, analysis and dissemination and application of findings, to take place without the involvement of any science professionals or research-related institutions. However, most ethical and regulatory frameworks for biomedical science arose from concepts of obligations of professionals and (largely not-for-profit) institutions. We will discuss current examples of “citizen science” in biology and clinical research, and the ethical and policy implications.

About the Speaker: Mildred Cho is a Professor in the Division of Medical Genetics of the Department of Pediatrics at Stanford University, Associate Director of the Stanford Center for Biomedical Ethics, and Director of the Center for Integration of Research on Genetics and Ethics. She received her B.S. in Biology in 1984 from the Massachusetts Institute of Technology and her Ph.D. in 1992 from the Stanford University Department of Pharmacology. Her post-doctoral training was in Health Policy as a Pew Fellow at the Institute for Health Policy Studies at the University of California, San Francisco and at the Palo Alto VA Center for Health Care Evaluation. She is a member of international and national advisory boards, including for Genome Canada, the March of Dimes, and the Board of Reviewing Editors of Science magazine. Her current research projects examine ethical and social issues in research on the human genome and microbiome, synthetic biology and genome editing, and the ethics at the intersection of clinical practice and research.

The United States needs to build a better governance regime for oversight of risky biological research to reduce the likelihood of a bioengineered super virus escaping from the lab or being deliberately unleashed, according to an article from three Stanford scholars published in the journal Science today.

"We've got an increasing number of unusually risky experiments, and we need to be more thoughtful and deliberate in how we oversee this work," said co-author David Relman, a professor of infectious diseases and co-director of Stanford's Center for International Security and Cooperation (CISAC).

Relman said that cutting-edge bioscience and technology research has yielded tremendous benefits, such as cheap and effective ways of developing new drugs, vaccines, fuels and food. But he said he was concerned about the growing number of labs that are developing novel pathogens with pandemic potential.

For instance, researchers at the Memorial Sloan Kettering Cancer Center, in their quest to create a better model for studying human disease, recently deployed a gene editing technique known as CRISPR-Cas9 on a respiratory virus so that it was able to edit the mouse genome and cause cancer in infected mice.

"They ended up creating, in my mind, a very dangerous virus and showed others how they too could make similar kinds of dangerous viruses," Relman said.

Scientists in the United States and the Netherlands, conducting so-called "gain-of-function" experiments, have also created much more contagious versions of the deadly H5N1 bird flu in the lab.

Publicly available information from published experiments like these, such as genomic sequence data, could allow scientists to reverse engineer a virus that would be difficult to contain and highly harmful were it to spread.

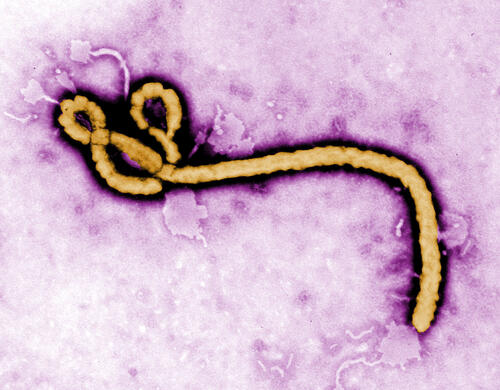

And a recent spate of high-profile accidents at U.S. government labs – including the mishandling of anthrax, bird flu, smallpox and Ebola samples – has raised the specter of a dangerous pathogen escaping from the lab and causing an outbreak or even a global pandemic.

"These kinds of accidents can have severe consequences," said Megan Palmer, CISAC senior research scholar and a co-author on the paper. "But we lack adequate processes and public information to assess the significance of the benefits and risks. Unless we address this fundamental issue, then we're going to continue to be reactive and make ourselves more vulnerable to mistakes and accidents in the long term."

Leadership on risk management in biotechnology has not evolved much since the mid-1970s, when pioneering scientists gathered at the Asilomar Conference on Recombinant DNA and established guidelines that are still in use today.

Palmer said that although scientific self-governance is an essential element of oversight, left unchecked, it could lead to a "culture of invincibility over time."

"There's reliance on really a narrow set of technical experts to assess risks, and we need to broaden that leadership to be able to account for the new types of opportunities and challenges that emerging science and technology bring," she said.

Relman described the current system as "piecemeal, ad hoc and uncoordinated," and said that a more "holistic" approach that included academia, industry and all levels of government was needed to tackle the problem.

"It's time for us as a set of communities to step back and think more strategically," Relman said.

The governance of "dual use" technologies, which can be used for both peaceful and offensive purposes, poses significant challenges in the life sciences, said Stanford political scientist Francis Fukuyama, who also contributed to the paper.

"Unlike nuclear weapons, it doesn't take large-scale labs," Fukuyama said. "It doesn't take a lot of capacity to do dangerous research on biology."

The co-authors recommend appointing a top-ranking government official, such as a special assistant to the president, and a supporting committee, to oversee safety and security in the life sciences and associated technologies. They would coordinate the management of risk, including regulatory authorities needed to ensure accountability and information sharing.

"Although many agencies right now are tasked with worrying about safety, they have got conflicting interests that make them not ideal for being the single point of vigilance in this area," Fukuyama said.

"The National Institutes of Health is trying to promote research but also stop dangerous research. Sometimes those two aims run at cross-purposes.

"It's a big step to call for a new regulator, because in general we have too much regulation, but we felt there were a lot of dangers that were not being responded to in an appropriate way."

Strong cooperative international mechanisms are also needed to encourage other countries to support responsible research, Fukuyama said.

"What we want to avoid is a kind of arms race phenomenon, where countries are trying to compete with each other doing risky research in this area, and not wanting to mitigate risks because of fears that other countries are going to get ahead of them," he said.

The co-authors also recommended investing in research centers as a strategic way to build critical perspective and analysis of oversight challenges as biotechnology becomes increasingly accessible.

Abstract: Faster evolving technologies, new peer adversaries, and the increased role of non-government entities change how we think about decisions to develop and adopt new technology. Uncertainties about technology “shelf life,” adversary intentions, and dual uses of technology complicate these decisions. This seminar will discuss the use of mathematical models and optimization methods to provide insight on technology policy issues. These issues include: balancing risk and affordability during technology research and development; timing technology adoption; and understanding adversary responses to new technologies. Examples will be discussed from offensive cyber operations and synthetic biology. We will conclude by discussing implications for how policy analysts and policy makers think about technology and security.

About the Speaker: Philip Keller is a National Defense Science and Engineering Graduate Fellow at Stanford. He is completing his PhD in Management Science & Engineering. He studies technology policy problems posed by new technologies. His research is highly interdisciplinary, drawing on methods from engineering risk and decision analysis, game theory, and operations research. His professional experience includes conducting studies and analysis for the Department of Defense and the Department of Homeland Security at RAND and the Homeland Security Studies and Analysis Institute. Previous study topics include unmanned aircraft operations; nuclear terrorism; offensive cyber operations; and military force structure. Philip holds a BS in Mathematics and an MS in Defense and Strategic Studies.

Abstract: Biotechnology is in a transition from artisanal tools and methods to computer-controlled, high-throughput systems that allow research and development at industrial scale. This digitization is also radically reducing technical and economic barriers, empowering a new generation of young designers to do bioengineering on par with major companies but at a fraction of the cost, and prompting a re-think of the entire industry, including business models, intellectual property, ethics and biosecurity. This shift has the potential to disrupt R&D on a global scale. This lecture provides an overview of the issues and opportunities.

About the Speaker: Autodesk Distinguished Researcher Andrew Hessel is spearheading the development of tools and processes that facilitate the computer-aided design and computer-aided manufacture of living creatures and systems. As a 2015-2016 AAAS-Lemelson Invention Ambassador, he also encourages others to explore invention and innovation in biological engineering. Andrew is active in the iGEM and DIYbio (do-it-yourself) communities and frequently works with students and young entrepreneurs to guide their career and business development efforts. He has given hundreds of invited talks on synthetic biology to groups that include Hollywood movie producers, the United Nations, and the FBI.

In an article published by the Council on Foreign Relations' Foreign Affairs magazine, David Relman and Marc Lipsitch examine recent advances in biological engineering as well as lapses in laboratory security in the context of biosafety and biosecurity concerns. The authors argue that current oversight is ill-equipped to handle the potential risks that can result from this type of research, and call for improved oversight mechanisms that involve diverse stakeholders to better govern these fields.

The H5N1 strain of the bird flu is a deadly virus that kills more than half of the people who catch it.

Fortunately, it’s not easily spread from person to person, and is usually contracted through close contact with infected birds.

But scientists in the Netherlands have genetically engineered a much more contagious airborne version of the virus that quickly spread among the ferrets they use as an experimental model for how the disease might be transmitted among humans.

And researchers from the University of Wisconsin-Madison used samples from the corpses of birds frozen in the Arctic to recreate a version of the virus similar to the one that killed an estimated 40 million people in the 1918 flu pandemic.

It’s experiments like these that make David Relman, a Stanford microbiologist and co-director of the Center for International Security and Cooperation, say it's time to create a better system for oversight of risky research before a man-made super virus escapes from the lab and causes the next global pandemic.

“The stakes are the health and welfare of much of the earth’s ecosystem,” said Relman.

“We need greater awareness of risk and a greater number of different kinds of tools for regulating the few experiments that are going to pose major risks to large populations of humans and animals and plants.”

Terrorists, rogue states or conventional military powers could also use the published results of experiments like these to create a deadly bioweapon.

“This is an issue of biosecurity, not just biosafety,” he said.

“It’s not simply the production of a new infectious agent, it’s the production of a blueprint for a new infectious agent that’s just as risky as the agent itself.”

But Relman cited a series of recent lapses at laboratories in the United States as evidence that accidents can and do happen.

“There have been a frightening number of accidents at the best laboratories in the United States with mishandling and escape of dangerous pathogens,” Relman said.

“There is no laboratory, there is no investigator, there is no system that is foolproof, and our best laboratories are not as safe as one would have thought.”

The Centers for Disease Control and Prevention (CDC) admitted last year that it had mishandled samples of Ebola during the recent outbreak, potentially exposing lab workers to the deadly disease.

In the same year, a CDC lab accidentally contaminated a mild strain of the bird flu virus with deadly H5N1 and mailed it to unsuspecting researchers.

And a 60-year-old vial of smallpox (the contagious virus that was effectively eradicated by a worldwide vaccination program) was discovered sitting in an unused storage room at a U.S. Food and Drug Administration lab.

Earlier this year, the U.S. Army accidentally shipped samples of live anthrax to hundreds of labs around the world.

Similar problems have been reported in labs around the world. The United Kingdom has had more than 100 mishaps in its high-containment labs in recent years.

It’s difficult to judge the full scope of the problem, because many lab accidents are underreported.

Studying viruses in the lab does bring important potential benefits, such as the promise of universal vaccines, as well as cheap and effective ways of developing new drugs and other kinds of alternative defenses against naturally occurring diseases.

“It’s a very tricky balancing act,” Relman said.

“We don’t want to simply shut down the work or impede it unnecessarily.”

However, there are safer ways to conduct research, such as using harmless “avirulent” versions of the virus that would not cause widespread death and injury if they infected the general public, Relman said.

Developing better tools for risk-benefit analysis to identify and mitigate potential dangers in the early stages of research would be another important step towards making biological experiments safer.

Closer cooperation among diverse stakeholders (including domain experts, government agencies, funding groups, governing organizations of scientists and the general public) is also needed in order to develop effective rules for oversight and regulation of dangerous experiments, both domestically and abroad.

“We believe that the solutions are going to have to involve a diverse group of actors that has not yet been brought together,” Relman said.

“We need new approaches for governance in the life sciences that allow for these kinds of considerations across the science community and the policy community.”

You can read more about Relman’s views on how to limit the risks of biological engineering in this article he wrote for Foreign Affairs with co-author Marc Lipsitch, director of Harvard’s Center for Communicable Disease Dynamics.

Abstract: The threat of biological attack on the people of the United States and the world, whether intentional, natural or accidental, is of growing concern, both in spite of and because of significant technological advances over the past four decades. As a global leader, the United States needs a comprehensive policy approach for managing future attacks, which incorporates technologic elements from rapid detection through appropriate response. American and international responses to recent infectious disease outbreaks such as anthrax (intentional, accidental), H5N1 influenza (natural) and Ebola (natural) have managed to contain these events, with the paradoxical effect on policy makers, both political and administrative, of relief (“missed that bullet”, “we must be doing this right”), rather than serving as wake-up calls. A challenge in merging technological solutions into policy lies in the rapid advances across the multiple sciences. Translation of these ongoing technologic advances for policy leaders is an essential element in effective policy development. Incorporation of technologic solutions into biosecurity policy construction, combined with motivated leadership, has the potential for enhancing future national and global responses to unprecedented biological attacks.

About the Speaker: Patrick J. Scannon, M.D., Ph.D. is XOMA's Company Founder, Executive Vice President, Chief Scientific Officer and a member of its Board of Directors. Since 1980, Dr. Scannon has directed the Company's product identification, evaluation and clinical testing programs for novel therapeutic monoclonal antibodies and proteins against infectious, oncologic, metabolic and immunologic diseases. As Chief Scientific Officer, he leads evaluations for new therapeutic antibody identification and discovery programs.

Dr. Scannon holds a Ph.D. in organic chemistry from the University of California, Berkeley and an M.D. from the Medical College of Georgia. He completed his medical internship and residency in internal medicine at the Letterman Army Medical Center in San Francisco. A board-certified internist, Dr. Scannon is also a member of the American College of Physicians. He is the inventor or co-inventor of several issued U.S. patents, and has published numerous scientific abstracts and papers.

Dr. Scannon has served as a member of the Research Committee for Infectious Diseases Society of America (IDSA), the National Biodefense Science Board (NBSB, a federal advisory board for the Department of Health and Human Services), the chair of the Chem/Bio Warfare Defense Panel for the Defense Threat Reduction Agency (DTRA) and a member of the Defense Sciences Research Council (DSRC, a research board for Defense Advanced Research Projects Agency (DARPA)). He has served as a Trustee of the University of California Berkeley Foundation and as a member of the University of California Berkeley Chancellor's Community Advisory Board. Dr. Scannon is currently on the Board of Directors of Pain Therapeutics, Inc.

Bioengineering researchers have recently constructed the final steps required to engineer yeast to manufacture opiates, including morphine and other medical drugs, from glucose, drawing significant interest, and concern, from the media and academics in the science and policy fields, including at the Center for International Security and Cooperation (CISAC).

“Researchers are getting better at building biology based platforms to create a wide variety of compounds that are difficult, inefficient, or sometimes impossible to create by other means,” Dr. Megan J. Palmer told National Public Radio in the weekly Science Friday segment.

She highlighted how these platforms can enable production of potentially safer, cheaper and more effective drugs. “But one significant concern is if we create the full pathway to go from glucose to this intermediate and then all the way to things like morphine, this could feed into illicit markets and bolster new illicit markets.”

The media this week has focused on comments by researchers who pointed out that the modified yeast could be used to manufacture heroin, a synthesized version of morphine. The prospect of “home-brewed heroin” has been prominently featured in news coverage.

There are significant concerns, says Palmer, but she cautioned that focusing solely on that possibility could lead to bad policy outcomes.

“There is a big opportunity for researchers, policy makers, and industry to work together to figure out what controls they can put in,” she said in a separate interview. “We have time to get ahead of this problem. We now have choices in how we build and regulate the technology. The challenge for regulatory and technical communities will be to avoid reactive quick fixes. It’s encouraging to see researchers engaging in these issues early on.”

The challenge will be to find ways for researchers, law enforcers, and policy experts to work together to build safeguards into the biology itself as well as into organizations and institutions.

“We really need to think about security as a design principle,” Palmer said. She hopes to foster thoughtful and rigorous analysis of how the design of biotechnology impacts future governance options.

“This issue highlights beautifully the nexus between public policy and science and technology, which is where CISAC has already, and will continue to make important contributions,” said CISAC Co-Director David Relman. Dr. Relman is also the Thomas C. and Joan M. Merigan Professor in the Departments of Medicine, and of Microbiology and Immunology at Stanford University.

CISAC recently hosted a seminar led by Stanford’s Dr. Christina Smolke that discussed technology advances that are resulting in alternative supply chains for drugs, with particular attention to opiates.

Dr. Smolke is also troubled by the over-emphasis of the risks associated with the potential technology. “I believe it’s inflammatory, biased, and not grounded in an accurate representation of the technology. However, the commentary focuses on the risks of the supply chain and proposes regulations/governance for such a technology, without implementing a process to engage various parties in discussions to thoughtfully assess risks, opportunities, and regulatory needs in this context.”

“I think we need to frame this issue in the context of the larger systemic challenges involving the rearrangements of supply chains enabled through bio-manufacturing and how we spread responsible norms and practices,” Palmer said. “We need to think about governance options in terms of human capacities and technical capacities. What safeguards can we engineer into our technologies, and in turn what safeguards can we build into our organizations and institutions?”

Abstract: Recent advances in synthetic biology are transforming our capacities to make things with biology. This bio-based manufacturing technology has the potential to be most disruptive around products for which existing material supply chains result in limited access. For example, broad access to medicines and the development of new medicines has been difficult to achieve, largely due to the coupling between material supply chains and these therapeutic compounds. We are developing a biotechnology platform that will allow us to replace current supply chains for already approved medicines with stable, secure, scalable, distributed, and economical microbial fermentation. Our initial target is the opioids, an essential class of medicines for pain management and palliative care, which are currently sourced through opium poppy cultivation. In addition, we will leverage this technology to access novel compound structural space that will open up tremendous opportunity for transforming the discovery and development of new drugs over a longer-time frame.

About the Speaker: Christina D. Smolke is an Associate Professor, Associate Chair of Education, and W.M. Keck Foundation Faculty Scholar in the Department of Bioengineering and, by courtesy, Chemical Engineering at Stanford University. Christina’s research program develops foundational tools that drive transformative advances in our ability to engineer biology. For example, her group has led the development of a novel class of biological I/O devices, fundamentally changing how we interact with and program biology. Her group uses these tools to drive transformative advances in diverse areas such as cellular therapies and natural product biosynthesis and drug discovery. Christina is an inventor on over 15 patents and her research program has been honored with numerous awards, including the NIH Director’s Pioneer Award, WTN Award in Biotechnology, and TR35 Award.

Encina Hall (2nd floor)